Homelab Infrastructure Overview (2026)

My earlier post on the Red Hat oVirt cluster captured a specific point in time in the evolution of my home platform. Since then, the environment has changed substantially. What started as a traditional homelab has become something much closer to a small enterprise infrastructure platform: virtualisation, segmented networking, shared storage, Kubernetes, automation, GPU workloads, and a mixture of personal, development, and real hosted services.

This post is a high-level overview of where the platform stands in 2026.

Virtualisation Platform

The current platform is built on a Proxmox VE 8.x cluster named cluster-alpha. Proxmox replaced oVirt as the primary virtualisation layer and has proven to be a practical fit for the way I now use the environment. It gives me a straightforward operational model for mixed infrastructure, while still supporting the capabilities I care about most: clustering, live migration, shared storage integration, and a clean way to manage both VMs and containers.

The cluster hosts a broad mix of workloads. Some are classic infrastructure services, some are workstation-style systems, some are Kubernetes nodes, and some are GPU-backed systems used for heavier compute or remote desktop/streaming scenarios. VM live migration is enabled, which is important both operationally and for maintenance flexibility.

At this point, the virtualisation layer is not there just to support experimentation. It underpins a platform that carries real services and needs to behave predictably.

Hardware Layout

At a hardware level, the environment is now a mix of older utility systems and denser modern hosts.

Compute and infrastructure nodes:

- alpha: 16 vCPUs, 48 GB RAM

- beta: 32 vCPUs (dual Xeon), 64 GB RAM, GeForce 1660, Tesla P4, RTX 3090

- gamma: 96 vCPUs (dual Xeon), 256 GB RAM, NVIDIA A5000

- delta: Dell PowerEdge R410 used primarily for Proxmox Backup Server, with IPMI power scheduling for weekly backup windows

Shared storage:

- nas1: modern shared storage system / SAN node, Intel Core i3-8100, 28 GB DDR4, providing the main NFS-backed storage tiers for the cluster
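As a quick cross-check of the figures above, the following minimal Python sketch totals the three hypervisor nodes that have vCPU and RAM figures listed (alpha, beta, gamma) and shows what remains when one node is drained for maintenance. The `Node` class and the helper names are illustrative only and not part of any real tooling; delta and nas1 are left out because no vCPU figure is given for them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One hypervisor node, using only the figures listed in this post."""
    name: str
    vcpus: int
    ram_gb: int
    gpus: tuple[str, ...] = ()


# Figures copied from the hardware list; nothing here is measured.
NODES = (
    Node("alpha", 16, 48),
    Node("beta", 32, 64, ("GeForce 1660", "Tesla P4", "RTX 3090")),
    Node("gamma", 96, 256, ("NVIDIA A5000",)),
)


def totals(nodes):
    """Sum vCPUs, RAM and GPU count across the given nodes."""
    nodes = list(nodes)  # allow generators, since we iterate three times
    return {
        "vcpus": sum(n.vcpus for n in nodes),
        "ram_gb": sum(n.ram_gb for n in nodes),
        "gpus": sum(len(n.gpus) for n in nodes),
    }


def remaining_without(nodes, drained):
    """Capacity left when the node named `drained` is out of service."""
    return totals(n for n in nodes if n.name != drained)


if __name__ == "__main__":
    print("cluster total:", totals(NODES))
    print("with gamma drained:", remaining_without(NODES, "gamma"))
```

With the listed figures, the three nodes total 144 vCPUs and 368 GB of RAM, and draining gamma leaves 48 vCPUs and 112 GB, which is worth keeping in mind when planning maintenance around live migration.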

Kubernetes Platform

Kubernetes has become a central part of the environment rather than a side project. I currently run multiple K3s clusters, hosted inside Proxmox VMs, with separate roles for management, primary workloads, and test environments.

The current cluster estate includes:

- a management cluster (mgmt1)

- a primary workload cluster (alpha)

- a secondary test cluster (beta)

That split gives me room to keep core platform functions separated from day-to-day experimentation. It also lets me test changes in something closer to a realistic operational model rather than treating everything as a single flat cluster.

The Kubernetes side of the platform is where I spend a lot of time exploring platform engineering patterns: GitOps, CI/CD-driven deployments, observability tooling, distributed services, and operational workflows that look more like enterprise delivery than a typical home setup.

K3s is still the current platform, but the likely future direction is RKE2. The main reason is alignment with more enterprise-oriented Kubernetes environments while still keeping the operational model manageable. K3s has been extremely useful, but RKE2 is the path I am most likely to take as the platform matures further.

That move would not just be a Kubernetes distribution change. It would also be part of a wider cleanup of the cluster networking and availability model. At a high level, the direction is toward KubeVIP replacing HAProxy for control-plane availability, and Cilium, which I already use with K3s, taking on a more complete role for security policy and cluster networking. That would also allow me to simplify how service load balancing is handled. I am deliberately keeping that description high level in a public post, but the overall direction is toward a cleaner and more integrated platform design.

Storage Architecture

The platform still relies on shared storage exported from a NAS layer. That shared storage is what makes migration across Proxmox nodes straightforward and keeps the virtualisation platform flexible.

There are multiple NFS-backed pools serving different purposes, including:

- premium NVMe SSD pools for high-performance VM storage

- encrypted NVMe SSD pools for more sensitive workloads

- standard hybrid HDD (NVMe SSD SLOG) RAID-Z2 pools for general-purpose storage

- mirrored hybrid HDD (NVMe SSD SLOG) pools for bulk and resilient storage

- dedicated backup storage for VM protection

The shared storage layer is really closer to a SAN platform than a simple NAS appliance at this point. In broad terms, I currently run NVMe-backed tiers for higher-performance workloads and roughly 80 TB of usable HDD-backed capacity for bulk and resilient storage. I also have SSD-to-HDD replication in place, which gives me a better balance between performance and data protection without forcing every workload into the same storage profile.

This model continues to work well, especially because it supports live migration and lets me place workloads on storage tiers that match their requirements.
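To make the idea of matching workloads to tiers concrete, here is a small Python sketch of a placement rule. The tier names mirror the pool types listed above, but the `choose_tier` function, its flags, and especially the order in which the rules are applied are assumptions for illustration, not a description of how placement is actually decided on this platform.

```python
from enum import Enum


class Tier(Enum):
    """Storage tiers, named after the pool types listed in this post."""
    PREMIUM_NVME = "premium NVMe SSD"
    ENCRYPTED_NVME = "encrypted NVMe SSD"
    HYBRID_Z2 = "standard hybrid HDD (Z2)"
    HYBRID_MIRROR = "mirrored hybrid HDD"
    BACKUP = "dedicated backup storage"


def choose_tier(*, is_backup=False, sensitive=False,
                latency_critical=False, bulk=False) -> Tier:
    """Pick a tier from coarse workload traits.

    The precedence (backup > sensitive > latency > bulk > default) is a
    hypothetical policy chosen for this example.
    """
    if is_backup:
        return Tier.BACKUP
    if sensitive:
        return Tier.ENCRYPTED_NVME
    if latency_critical:
        return Tier.PREMIUM_NVME
    if bulk:
        return Tier.HYBRID_MIRROR
    return Tier.HYBRID_Z2  # general-purpose default


if __name__ == "__main__":
    print(choose_tier(latency_critical=True).value)
    print(choose_tier(sensitive=True, latency_critical=True).value)
    print(choose_tier().value)
```

Encoding the rule as a function keeps the policy in one reviewable place, even if the real decision involves more factors than these four flags.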

That said, shared NAS-backed storage is not the final destination. The next major area I want to explore is hyperconverged storage, most likely via Ceph on the Proxmox side, Rook-Ceph in Kubernetes, or both. The existing design is stable and practical, but the long-term direction is clearly toward a more integrated storage model.

Within Kubernetes, persistent volumes are currently provided mainly by OpenEBS, with some workloads also using the NFS CSI provisioner. That is largely a consequence of the underlying shared-storage design. It works, but it is also one of the clearest places where future storage evolution could simplify the stack.

Networking

The network architecture is intentionally closer to a small enterprise topology than a simple homelab. That has been a deliberate design choice for a long time, and it has become more important as the platform has grown.

The backbone is built around 10 Gb networking, LACP-bonded interfaces, VLAN-aware Proxmox bridges, and fairly heavy network segmentation. Rather than treating the environment as one trusted flat network, I split traffic and workloads into distinct domains for management, storage, Kubernetes, bastion access, home services, guest access, IoT, development/test workloads, and client-style simulation networks.

Internally, that includes VLAN segments such as:

- home

- management

- private development

- IoT

- guest

- trusted workstation

- managed client

- VM management

- VoIP

- K3s alpha

- K3s management

- K3s beta

- edge

- home security

- SAN

- storage

- NAS

That level of segmentation is useful not just for tidiness, but for modelling operational boundaries, traffic separation, and failure domains in a way that better reflects real infrastructure environments.
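A segment list like this can be turned into an addressing plan with Python's standard `ipaddress` module. The sketch below is illustrative only: the segment names come from the list above, but the `10.0.0.0/16` supernet, the /24 size, and the VLAN IDs (10, 20, 30, ...) are made-up values, not the real numbering used on this network.

```python
import ipaddress

# Segment names as listed in this post; order is arbitrary.
SEGMENTS = [
    "home", "management", "private development", "IoT", "guest",
    "trusted workstation", "managed client", "VM management", "VoIP",
    "K3s alpha", "K3s management", "K3s beta", "edge", "home security",
    "SAN", "storage", "NAS",
]


def plan_vlans(segments, supernet="10.0.0.0/16", prefix=24,
               first_id=10, step=10):
    """Assign each segment a VLAN ID and a non-overlapping subnet.

    All defaults are hypothetical example values.
    """
    subnets = list(ipaddress.ip_network(supernet).subnets(new_prefix=prefix))
    if len(segments) > len(subnets):
        raise ValueError("supernet too small for the number of segments")

    plan = {}
    for index, name in enumerate(segments):
        vlan_id = first_id + index * step
        if not 1 <= vlan_id <= 4094:  # usable 802.1Q VLAN ID range
            raise ValueError(f"VLAN ID {vlan_id} out of range for {name!r}")
        plan[name] = (vlan_id, subnets[index])
    return plan


if __name__ == "__main__":
    for name, (vlan_id, subnet) in plan_vlans(SEGMENTS).items():
        print(f"VLAN {vlan_id:>4}  {str(subnet):<16} {name}")
```

Because every subnet is carved from a single supernet, the segments cannot overlap, and firewall rules between domains can be written against predictable prefixes.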

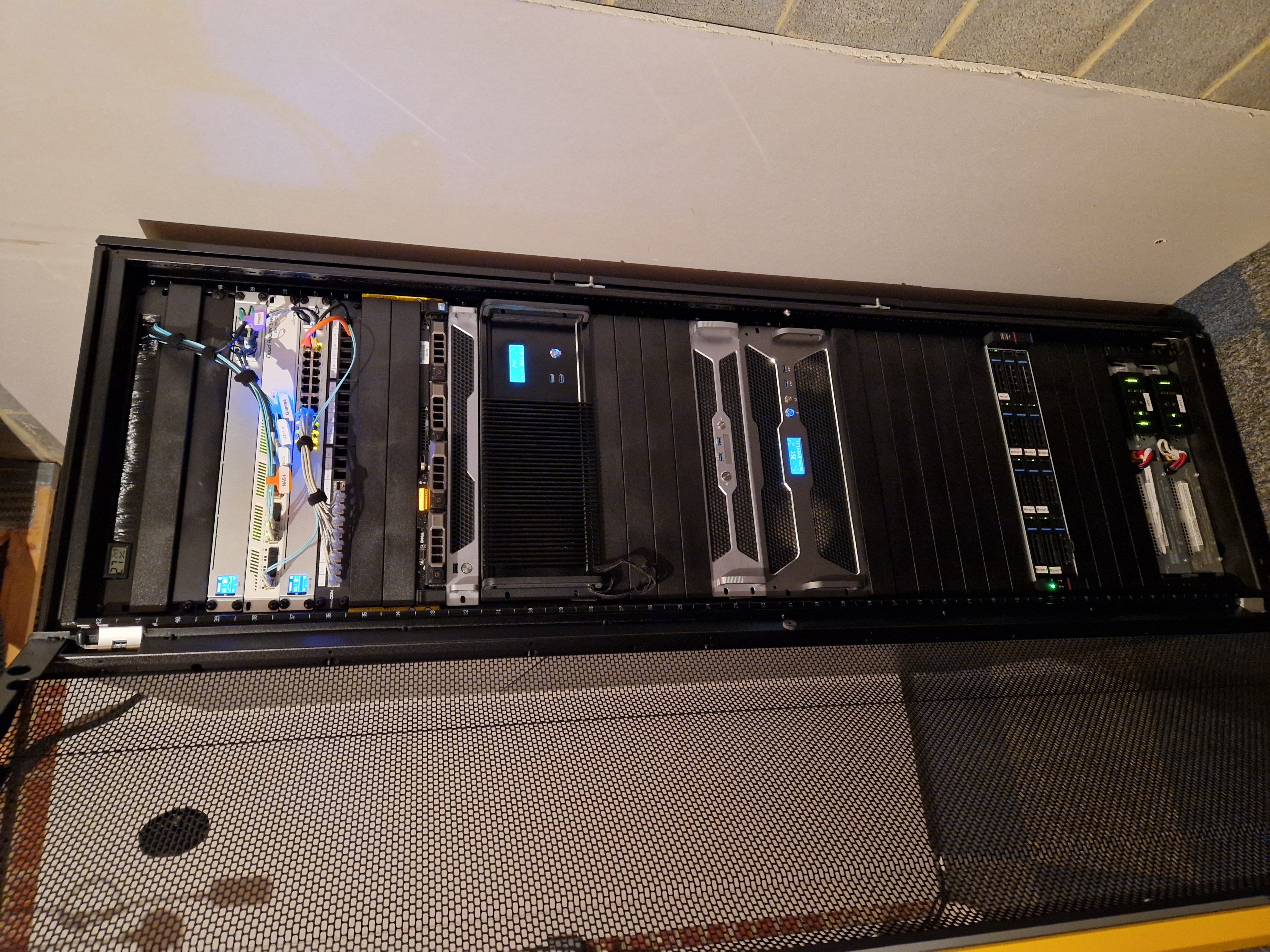

Physically, the network is split between the garage rack and the house cabinet. The garage holds the heavier compute and storage side of the environment, while the house cabinet contains the firewall, core switching, wireless infrastructure, and local access edge.

Power resilience has also improved over time. The platform now has two 1500W UPS units rather than a single unit, and the wider environment is backed by a 16-panel solar installation split across the house and garage. That does not remove the need for sensible power planning, but it does make the whole setup feel much closer to an infrastructure platform than a loose collection of lab hardware.

Workloads

This environment is no longer just a lab built for curiosity. It hosts real workloads.

That includes:

- internal infrastructure services

- development environments

- Kubernetes-hosted services

- personal infrastructure services

- workloads for my family engineering company

- small client websites and hosted services

- GPU-enabled workstations

- game streaming workloads using vGPU slicing

- local AI experimentation

That mix is a big part of why the platform has evolved the way it has. The design has had to move beyond interesting technology and toward something that is operationally useful. Reliability, segmentation, automation, and repeatability matter much more once the platform is carrying services people actually depend on.

The GPU side has grown as well. Some systems are used for remote workstation use cases, some for streaming-style workloads, and some for local AI and LLM experimentation. I am intentionally keeping that part high level here, but local inference, development tooling, and AI-assisted workflows are now a meaningful part of the platform’s role.

Automation and CI/CD

Automation is now central to how I operate the environment.

Git-driven workflows, CI/CD pipelines, containerised deployment patterns, Kubernetes manifests, and Helm all play a part. Increasingly, I prefer changes to be expressed in code and moved through repeatable pipelines rather than handled manually.

This blog and CV site are examples of that same philosophy. They are now generated with Hugo and deployed through CI/CD pipelines rather than maintained as a runtime CMS. That shift mirrors the broader direction of the platform: simpler runtimes, immutable artifacts, reproducible builds, and clearer separation between source and deployment.

The Kubernetes side of the environment is where that automation-first approach becomes most visible, but the same thinking now applies across virtualisation, networking, application hosting, and platform operations.
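The core idea behind Git-driven operations, comparing what the repository declares with what is actually running and acting only on the difference, can be sketched in a few lines of Python. This is a generic illustration of that reconcile pattern rather than the tooling used here; the `diff_state` function and the service names and versions in the example are hypothetical.

```python
def diff_state(desired: dict[str, str],
               actual: dict[str, str]) -> dict[str, list[str]]:
    """Compare declared state (e.g. from Git) with observed state.

    Both arguments map a resource name to a version string. The result
    lists which resources would need creating, updating, or deleting.
    """
    create = sorted(name for name in desired if name not in actual)
    delete = sorted(name for name in actual if name not in desired)
    update = sorted(
        name for name in desired
        if name in actual and desired[name] != actual[name]
    )
    return {"create": create, "update": update, "delete": delete}


if __name__ == "__main__":
    # Hypothetical example data, not real services from this platform.
    desired = {"blog": "v2", "cv": "v1", "api": "v3"}
    actual = {"blog": "v1", "cv": "v1", "legacy": "v9"}
    print(diff_state(desired, actual))
```

A pipeline built on this pattern is repeatable because running it twice against an already-converged environment produces an empty change set.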

Future Direction

The main areas I expect to keep evolving are fairly clear.

On the virtualisation side, Proxmox is firmly established as the current platform. On the Kubernetes side, K3s remains the present-day choice, but RKE2 is the likely next step, together with a simpler and more integrated control-plane and networking model centred on KubeVIP and Cilium. On the storage side, shared NAS-backed storage continues to work, but hyperconverged storage is the most obvious future direction, particularly through Ceph or Rook-Ceph.

More broadly, I expect the platform to keep moving in the same direction it has already been heading:

- closer to enterprise operational patterns

- more automation-driven

- more Kubernetes-centric

- more reproducible and Git-managed

- more capable of hosting real services rather than just experiments

That is really the key change from the earlier oVirt-era platform. The technology has changed, but the bigger difference is the role the environment now plays. It has become a serious infrastructure platform in its own right.